

Improvisation From The Proscenium

The Matter of Mind, Myth, and Metaphor

(Part Three of Three)

by Harold Williamson

www.dissidentvoice.org

May 31, 2005


* Read Part One
* Read Part Two

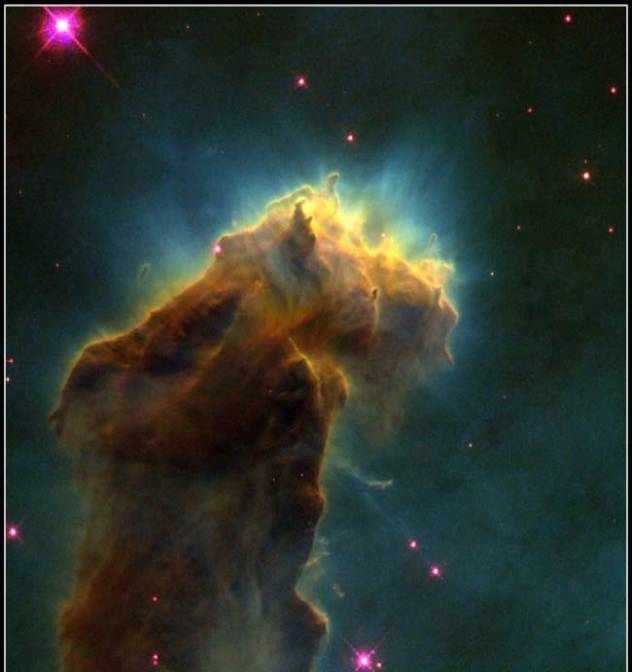

This picture was taken on April 1, 1995, with the Hubble Space Telescope. This eerie structure is made of molecular hydrogen gas and dust that is an incubator for new stars. It is 7,000 light-years away, and each “fingertip” is larger than our solar system. Credit: Jeff Hester and Paul Scowen (Arizona State University), and NASA.

“Why should we not all live in peace and harmony? We look up at the same stars, we are fellow-passengers on the same planet and dwell beneath the same sky. What matters it along which road each individual endeavors to find the ultimate truth? The riddle of existence is too great that there should be only one road leading to an answer.”

-- Quintus Aurelius Symmachus

Philosophy has a warning device called “paradox”: it calls attention to mistaken assumptions about how something works in the “real” world even when there is no mistake in the logic. It is particularly exemplified by human bias in experimental results, and this “expectancy” can be demonstrated mathematically. When this occurs, an assumption about the “real” world must be changed -- not the logic. This was not a problem for the Classical or Aristotelian logic that held sway until the 19th century, because that logic was concerned only with the formal properties of an argument and not with its “factual” accuracy. We now use a symbolic logic that replaces ordinary language with mathematical symbols, and from it theorists build models of the “real” universe. When faced with paradoxes such as infinite energies or negative probabilities, we are lost in a labyrinth where we must reconcile our senses (“matters of fact”) with our logic (“truths of reason”) to guide us to the “real” world -- wherever that is. But a compass is useless without a map, and if we are not certain where we are in relation to where we are going, how do we resolve this?

Karl Popper, the noted 20th-century Anglo-Austrian philosopher, contended that the only basis for “progress” in science is the objective reproducibility of experimental results. He did indeed have a point. But the science that he had so faithfully championed with his logically simple yet very complex methodology has dispossessed him of the very objectivity that he guarded so tenaciously. Although “progress” is a concept that is ambiguous in meaning and application, Popper argued that science could make “progress” only by avoiding idea-dependent explanations of experimental results. He contended that it is necessary to assume universal physical laws and supplement them with initial conditions. But since this is reminiscent of the cumbersome cycles and epicycles that were created to explain the incorrect worldview of the Ptolemaic system that Galileo so effectively demolished with his telescope, it is necessary to temper this approach with “Ockham’s razor” -- attributed to William of Ockham, the 14th-century English scholastic philosopher who rejected the idea of universal concepts and was charged with heresy in 1324 by Pope John XXII. Simply stated, it is vain to do with more what can be equally accomplished with less. Science continues to use this principle a fortiori by preferring the simplest of competing theories. But this assumes that nature does indeed work this way, and this is a big assumption.

The idea of a theory of knowledge based on an understanding of human mental processes can be attributed to the 17th-century English philosopher John Locke. This observation from his Dedicatory Epistle to An Essay Concerning Human Understanding (1690) is incisive: “New opinions are always suspected, and usually opposed, without any other reason but because they are not already common.” Likewise, science first attempts to explain unknown phenomena in terms of what is already “known”, and this supposedly separates viable scientific theories from mere speculation. But actually this is nothing more than the very same preference for old uncertainties over new ones; and the more comprehensive a new theory is, the more likely it will initially face considerable opposition by orthodox science.

The problem is that a theory is not necessarily wrong because it can be ruled out by any of these methods. An important example is Einstein’s Theory of General Relativity. Instead of adding to the classical physics of Newtonian mechanics, Einstein’s theory engulfed it within a revolutionary new way of perceiving the universe. If Einstein had continued to wander with the multitude in the wilderness for forty years without an idea-dependent direction to guide him, we would be no closer to the “Promised Land” than we were in the 17th century -- although we can’t be certain we are heading in the right “direction” now. In spite of the dangers inherent in the use of metaphors on all counts here, the Exodus does provide a graphic analogy of the journey that underlies the idea of “progress” in the sciences, because there can be no “progress” without a direction or destination in mind. Science follows its reason as far as it will allow, and to believe otherwise would mean that science has been able to find its way because it never had any idea where it was going. Some would not only agree that this is indeed what has happened, but also argue that it is preferable. The noted philosopher Paul Feyerabend advocated an approach that rejects the specific use of a rational scientific method, and he argued that such a method is not only counterproductive but also impossible to achieve.

Popper passionately attacked historical proofs as not being “falsifiable” and therefore “unscientific”, and they are in this sense. However, science has been successful in condensing experiences of phenomena into manageable forms with the use of inductive generalizations, or what are popularly known as “laws of nature.” But as the 19th-century author Alexandre Dumas fils quipped, “All generalizations are dangerous, even this one.” I continue this thought by saying that all generalizations cloak a negative hypothesis -- viz. “This law has no exceptions.” Since, as has been said, the discovery of one ugly fact can ruin a beautiful old theory, it is not possible to “scientifically” prove that a negative hypothesis is true in a finite universe, let alone in a universe that may be infinitely large. Only “historical” proofs will work -- meaning that to date no evidence to the contrary has been discovered. In the case of an infinite universe, proof would require performing an infinite number of actions in a finite amount of time, which is logically impossible. So in either case it is necessary to have faith that somewhere under the mattress there are no hidden peas that will disturb our fragile dreams.

We do not know if the universe is infinite, but we can say that science is a method that approximates a philosophical supertask by searching for exact knowledge in every possible time and place in a universe that is indeed gargantuan. Although it is not necessary to physically travel to all corners of the universe in order to understand it, perceptions become increasingly abstract as the observer becomes further removed in time and space from concrete existence. Most of what is known about the composition, structure, and evolution of the universe has been deduced from the study of light being emitted from distant objects. According to NASA, the most distant galaxy seen by the Hubble Space Telescope could be as far away as 13,000 million light-years. This was deduced from light that only a moment ago arrived from when the universe was less than one tenth its current estimated age of 14,000 million years. But consider that if the sun were a grain of salt, the Milky Way Galaxy with its estimated 200,000 million stars would represent a 70-ton pile of salt with each grain being over 7 miles apart. Since the universe contains some 100,000 million such galaxies, its enormousness is overwhelming to the imagination. The distance from Earth to the star Proxima Centauri is 4.3 light-years, over 25 million million miles. But if it started the journey today, the space shuttle could not reach this nearest star to our solar system within the next 25,000 years. Now consider its randomness and complexity and the brief time that we have been searching for answers, and science is far from an ultimate understanding of the universe . . . perhaps far from even asking the right question.

In an attempt to understand our world, highly specialized science has charted a course of reverse engineering, or reductionism. Although it has been provisionally useful, this charter has mandated that the complex “whole” be explained in terms of the behavior of its simpler component parts. In cosmology there is uncertainty about what that “whole” is. Yet we continue to rely on inductive logic to arrive at generalizations about specific things, with the “whole” being what we imagine within our perceptions of what is real. This is akin to dismantling the Sistine Chapel and then trying to understand its significance by performing solemn philosophical liturgies over the piles of rubble. Our technology has enabled our finding new things that are smaller and farther away; but it is perhaps not so ironic that the closer we are able to look, the less certain everything becomes. By predicating an understanding of the “whole” on the examination of its parts, we forgo opportunities of knowing things that by their very nature transcend disassembly. For instance, we have yet to learn how to explain the “emergence” of phenomena that come into existence as things become more complex -- particularly in life sciences. Consciousness does not exist at the cellular level, and life does not exist at the molecular level, and so forth. So just how relevant are narrowly focused scientific observations and theories to an understanding of the universe as a whole? According to Pierre Duhem, an early 20th-century French philosopher of science, it is a mistake to assume that scientific theories tell us anything about reality, let alone about the entire universe.

Even with all this anthropocentric high-mindedness, we have yet to understand even basic things that are accepted as a priori knowledge. What we call “life”, for instance, has only popular meaning. Ever since Aristotle, the Western worldview has included “life” as being something more than merely organized “matter”. The “mind” is a collective term that includes various forms of consciousness. Yet there is no clear consensus of what consciousness is, let alone how it works. We do not understand “matter” well enough to build a complete model of our universe, and it may be irreducible (it cannot be made any simpler than it actually is). “Something” is missing that unifies the forces of the particle world with the forces of the world in which the brain perceives reality, and we don’t know what that “something” is. Science refers to it in general terms as the “theory of everything,” or Grand Unified Theory (GUT). It may be a strange field or particle (Higgs boson), a Platonic mathematical entity (137, approximately the inverse of alpha, the fine-structure constant), a Pythagorean harmony of the cosmos (a “superstring” symphony -- classical, of course!), or a transcendent archetype (God). Pick any metaphor you like; no one knows.

In a postmodern world, it can be argued that science is involved in a tacit search for God. Can agnosticism, then, be a valid approach to theology? Since this method must begin with a hypothesis that is only testable in terms of material phenomena, applying agnosticism to theology is a mistake. But denying altogether that God exists requires the untenable position of proving a negative hypothesis involving transcendental beliefs, which is neither good philosophy nor good science. The cosmological first cause theory supports the deist philosophy (God created the universe and then stepped aside). Arguably it is the least controversial because it is free of inherent contradictions and does not violate accepted principles of logic, but even the deist philosophy leaves open the question of God’s whereabouts and form. Since this most fundamental question about the nature of God cannot be explained in terms of material phenomena, Kant is correct in his view that God is unexplainable in this context. But is anything explainable in this context when the very existence of an absolute physical basis for phenomenal reality is controvertible in a universe that is both indeterminate (governed by probabilities) and immaterial (mental constructs of quantum events)? It seems that agnosticism is no more at variance with theology than it is with itself.

We improvise our own truths at will, but not our own history. The 19th-century German social philosopher Karl Marx said, “Men make their own history, but they do not make it just as they please….” Descartes, Galileo, and Newton courageously worked to include mankind in the metaphysics of medieval scholasticism, but ironically they accomplished the exact opposite. They constructed an eternal mechanical universe that followed inexorable laws of cause and effect that firmly established the events of the past, present, and future as having been determined from the very beginning, thus making free will an illusion. Only with the establishment of the science of quantum physics was it possible to accomplish what these men set out to do; but in the process, mankind lost all prospects of finding objective knowledge in a universe that is an illusion. We had become accustomed to a simple view of our perceptions, and things were either present or absent regardless of whether they were seen or not. Even though it was impersonal, our world was solid and real. But quantum physics, the very foundation of our science, says that this simple view is not true. Again we must reconcile our senses with our reason. Are we back to where we started, or did we never leave?

At the beginning of the 20th century it was difficult to obtain any scientific information, but at the beginning of the 21st century the difficulty lies in how to react to an unprecedented accumulation of highly specialized scientific information. Since much of the world population is not literate in the sciences and embraces a more overtly mystical worldview, a major cultural imbalance can result if an elitist parochialism is allowed to develop, as it has in most human endeavors. Means often become ends-in-themselves, accompanied by disdain for other philosophies that are attempts to reach the same ends. There is a danger of science becoming technology for its own sake, with functionaries doing experiments simply because they can be performed, without regard to whether they should be performed. Today there is an increasing dependence on complex technologies controlled by multinational corporations that are still consolidating their power, and the consequences of these new technologies will depend on how they are used. For example, there are segments of the human genome that profoundly influence the development of the “mind” and body of each of us, and some are corporate property protected by patents. The consequences of how this technology is used will probably be much more than revolutionary; they will be evolutionary.

But at this point in time we do not know much about the overall scheme of things in biological terms either. A study by The Institute for Genomic Research reported that among the 300 or so different genes that are necessary to keep a simple bacterium alive, scientists have no idea what a third of them do. This is far from understanding the 100 million million specialized cells of the complex human organism that contain at least a hundred times as many different genes. In addition, Richard Lewontin, an eminent biologist at Harvard University, asserts that no organism can be “computed” from the information in its genes. He maintains that the use of the word “compute” as a metaphor to describe the role of genes is bad biology because it implies an internal self-sufficiency of DNA, whereas an organism is the unique result of a process that includes the sequence of environments in which it develops. It gets even more complicated than this, because recent experiments have shown that variations in symmetry within the same environment are caused by random events at the molecular level.

It is not certain to what degree our sapience -- at least that by which we emphatically define ourselves taxonomically as Homo sapiens sapiens -- is genetically coded. But let’s take a giant leap of faith and assume that we can capture the spirit of Daedalus by using applied genetics to redesign ourselves to be on a par with our technology before we blast into the cosmos. Will we inadvertently assume the spirit of Icarus as well, and suffer the same fate? So far, our medical strategy has been to minimize elements that work “against” us in natural selection, even though these very elements regulate our population within a perilously balanced biosphere. We have yet to cure all ills, the Fountain of Youth has not been found, and both victim and survivor can rest assured that they are not to be blamed for whatever happens in this regard. But when this is no longer the case, what will happen to society? Today, some look upon China’s policy of one child per couple as totalitarian, and others look upon birth control, abortion, and euthanasia as immoral and unethical. Americans are currently faced with the political issue of permitting research in the United States on human embryonic stem cells that may hold the key to treating a wide range of human diseases. Yet this is mere child’s play compared to what “we” will be faced with in a “brave new world” of our own design. But the role of God cannot be assumed without losing our humanness, so “we” does not mean “us” in this new theater of the absurd.

To-morrow, and to-morrow, and to-morrow,

Creeps in this petty pace from day to day,

To the last syllable of recorded time;

And all our yesterdays have lighted fools

The way to dusty death. Out, out, brief candle!

Life’s but a walking shadow; a poor player,

That struts and frets his hour upon the stage,

And then is heard no more…

-- William Shakespeare from Macbeth (V, v, 19)

* Read The Epilogue to this series.

Harold Williamson is a Chicago-based independent scholar. He can be reached at: h_wmson@yahoo.com. Copyright © 2005, Harold Williamson

Other Articles by Harold Williamson

* Amnesty International: US Monkeying With Human Rights
* Improvisation From The Proscenium, Part Two
* Did Newsweek Damage America's Image?
* Improvisation From The Proscenium, Part One
* Watching George Bush Trying to Pull a Rabbit Out of His Hat
* Shooting the Messenger Who Reported Human Rights Abuses in Afghanistan
* Agent Orange -- Thirty Years After
* Truth in Humor
* Redefining America
* The Missing WMD: Bush's Red Herring
* The Darkness in America
* Spinning The Vietnam War: What Goes Around Comes Around
* None Dare Call It Murder
* It Isn't God Who is Crazy
* Don't Trust Anybody Over Thirty
* Faith in the Postmodern World
* Remember Who The Enemy Is
* Obscenity, A Sign of the Times and the Post
* Thinking Anew: A Do-It-Yourself Project
* America's Blind Faith in Government
* Think Tanks and the Brainwashing of America
* Bully for the Bush Doctrine: A Natural History Perspective